Transcription

See Plugins > WhisperRealtime > Sample > BP > Transcript > BP_WhisperTranscriptRealtime for a sample implementation.

You can test it in the sample map located at Plugins > WhisperRealtime > Sample > Map > test_Transcript.

Step-by-step tutorial

Basic setup

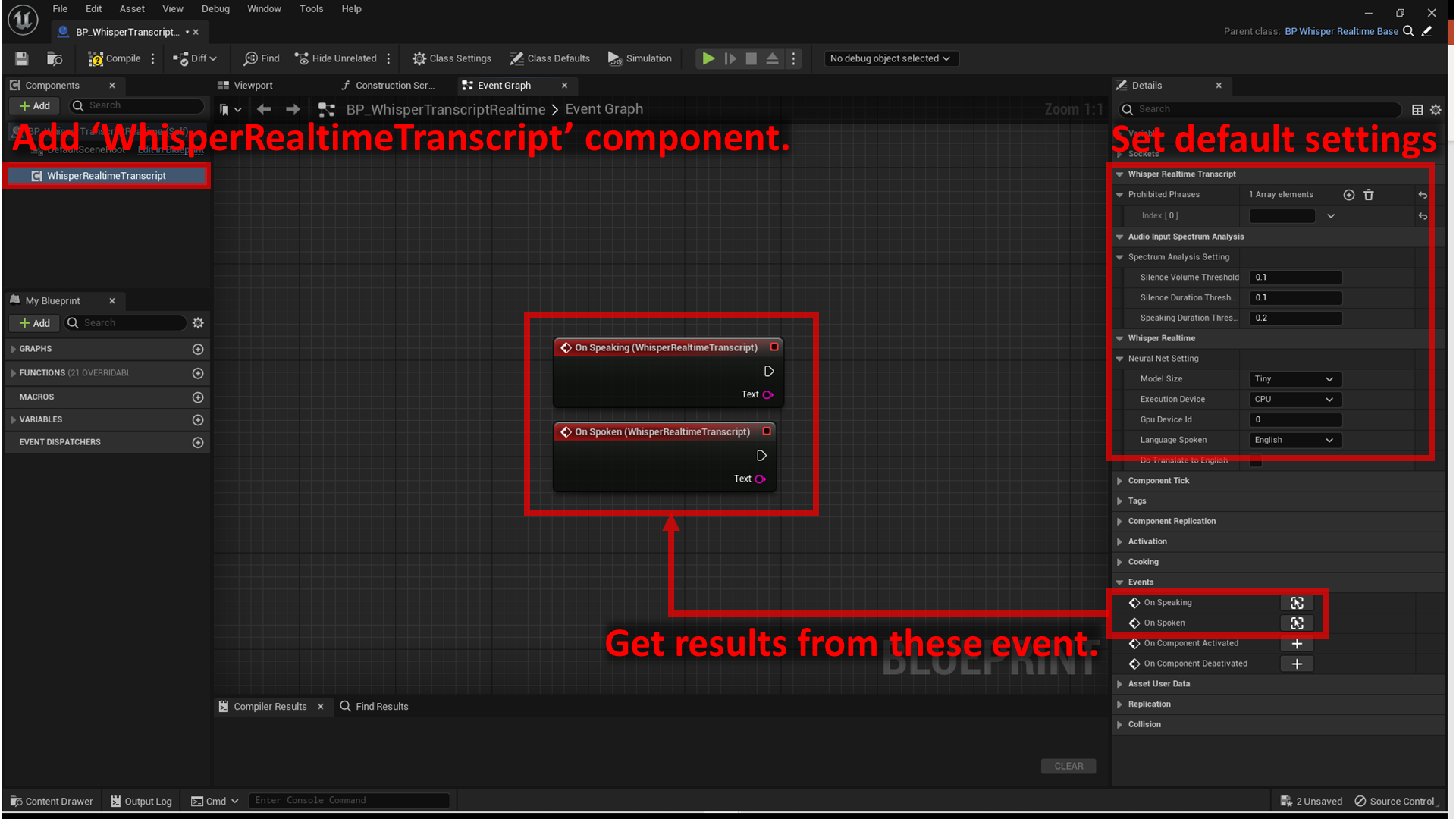

- Create an actor blueprint.

- Add the `Whisper Realtime Transcript` component.

- Set the default Neural Net settings:

    - Specify `Model Size`. The larger the model, the higher the accuracy and the CPU/GPU/memory usage.

        Where is the Large model?

        The official implementation of Whisper offers "Large" as the largest model size, but this plugin does not include the Large model. It is too large for game use and could not be made to work properly in the plugin developer's environment.

    - Specify `Execution device`: whether to use the CPU or the GPU.

    - Specify `GPU Device ID` if you use the GPU and your PC has multiple GPUs.

    - Specify the `Language` spoken.

    - Specify `Do Translate to English`. If you just want to transcribe speech to text in the language specified above, leave it unchecked.

- Set the default Audio Input Spectrum Analysis settings:

    - Specify `Volume Multiplier`, the volume multiplier for microphone input. A value of 1.0 leaves the input unchanged. This value does not affect the silence/speech determination made with the threshold below.

    - Specify `Silence Volume Threshold`, the volume threshold for determining silence. The maximum microphone input is 1.0; complete silence is 0.0.

    - Specify `Silence Duration Threshold`, the time threshold (in seconds) for determining the silent state. If the volume stays below `Silence Volume Threshold` for this long, the state changes from speech to silent.

    - Specify `Speaking Duration Threshold`, the time threshold (in seconds) for determining the speaking state. If the volume stays above `Silence Volume Threshold` for this long, the state changes from silent to speech.

- Set the default transcript settings:

    - Specify `Prohibited Phrases` if you want to suppress certain phrases.

        - Note that this feature simply removes the specified phrases from the result. If you specify a short phrase, for example `at`, it is removed from every word that contains it (e.g. `that` becomes `th`).

- Get results from the `On Speaking` event and the `On Spoken` event. The `On Speaking` event gives an intermediate result while the user is still speaking. The `On Spoken` event gives the final result after the user stops speaking.

Warning

Note that the end of the `On Speaking` result tends to be incorrect because the audio is cut off abruptly in the middle of the speech.

Info

The values in the Audio Input Spectrum Analysis settings are used to determine whether the user is speaking.
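To make the interaction between the three thresholds concrete, here is a minimal sketch of the silence/speech decision described above. It is written in plain Python for illustration only and is not the plugin's code: the class name `SpeechDetector`, its `update` method, and the default values are all assumptions. The sketch takes the raw volume as input, matching the statement that the volume multiplier does not affect this determination.

```python
from dataclasses import dataclass, field


@dataclass
class SpeechDetector:
    """Hypothetical model of the silence/speech decision (not plugin code)."""

    silence_volume_threshold: float = 0.05     # illustrative, range 0.0..1.0
    silence_duration_threshold: float = 0.5    # seconds, illustrative
    speaking_duration_threshold: float = 0.25  # seconds, illustrative
    speaking: bool = False
    # Time the volume has continuously disagreed with the current state.
    _elapsed: float = field(default=0.0, init=False, repr=False)

    def update(self, volume: float, dt: float) -> bool:
        """Feed one volume reading covering dt seconds; return the state."""
        loud = volume > self.silence_volume_threshold
        if loud != self.speaking:
            # The volume is on the "other" side of the threshold:
            # accumulate time until the relevant duration is reached.
            self._elapsed += dt
            needed = (
                self.speaking_duration_threshold
                if loud
                else self.silence_duration_threshold
            )
            if self._elapsed >= needed:
                self.speaking = loud
                self._elapsed = 0.0
        else:
            # The volume agrees with the current state: reset the timer.
            self._elapsed = 0.0
        return self.speaking


detector = SpeechDetector()
readings = [0.5, 0.5, 0.0, 0.5, 0.0, 0.0, 0.0, 0.0]
states = [detector.update(v, dt=0.125) for v in readings]
print(states)
```

In this model, a short dip below the threshold does not end the speech state, and a short spike above it does not start one. Raising either duration threshold makes the corresponding transition slower but less sensitive to noise.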

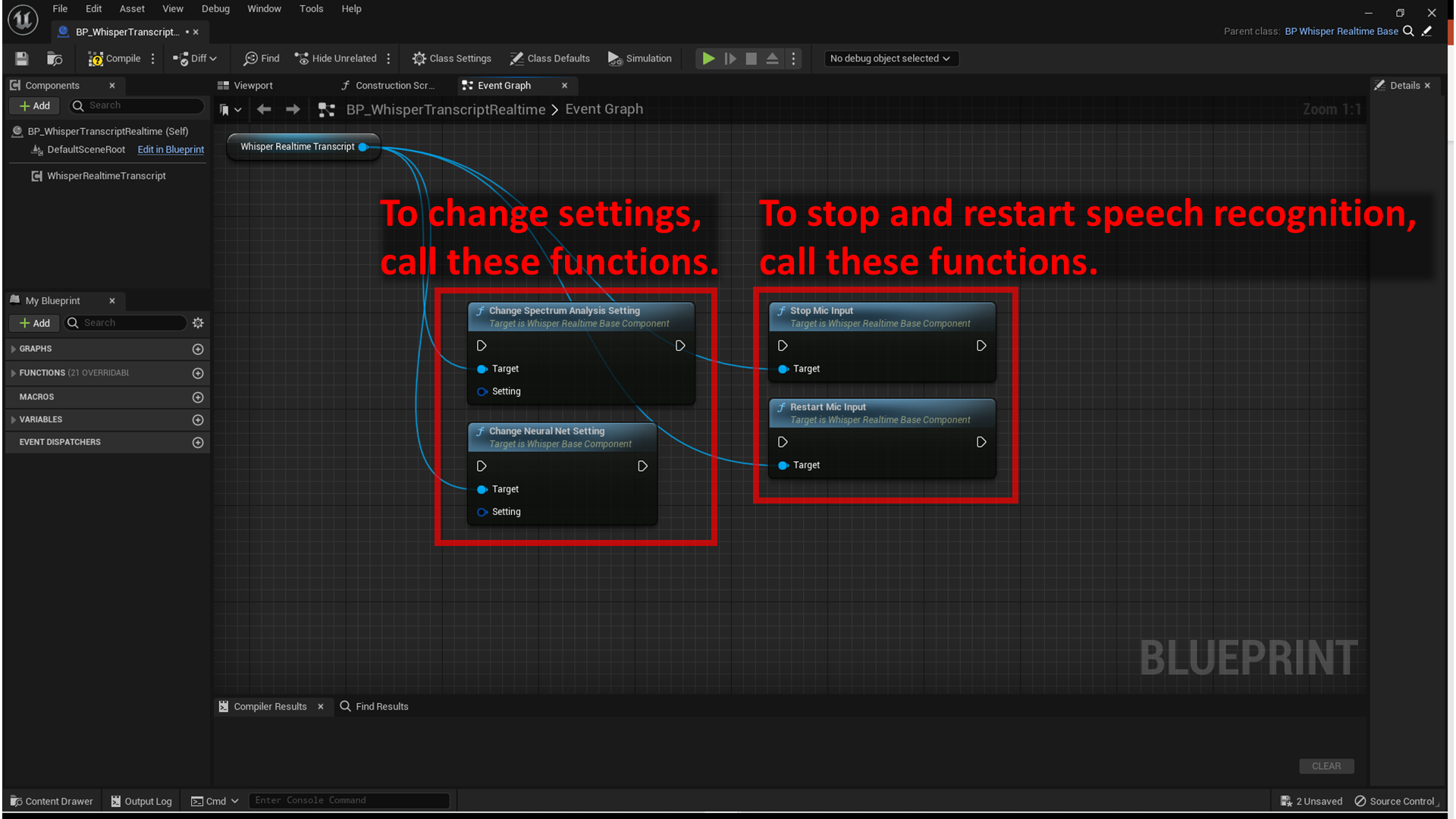
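The caveat about short prohibited phrases follows from plain substring removal. The sketch below reproduces that behavior in Python so you can check a phrase list before using it. The function name `remove_prohibited_phrases` is hypothetical, and the sketch assumes the removal is an exact, case-sensitive substring match; the plugin's actual matching rules may differ.

```python
from typing import Iterable


def remove_prohibited_phrases(text: str, phrases: Iterable[str]) -> str:
    """Delete every occurrence of each phrase by plain substring replacement.

    Assumption: exact, case-sensitive matching. This models the behavior
    described in the documentation, not the plugin's implementation.
    """
    for phrase in phrases:
        if phrase:  # skip empty strings, which would match everywhere
            text = text.replace(phrase, "")
    return text


# A short phrase is also removed from inside longer words.
print(remove_prohibited_phrases("look at that", ["at"]))

# A longer, more distinctive phrase leaves other words intact.
print(remove_prohibited_phrases("thanks for watching that", ["thanks for watching "]))
```

Choosing longer, distinctive phrases reduces the chance of clipping unrelated words.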

Change settings

- To change the Audio Input Spectrum Analysis settings, call the `Change Spectrum Analysis Setting` function.

- To change the Neural Net settings, call the `Change Neural Net Setting` function.

Stop and restart

- To stop transcription or translation, call the `Stop Mic Input` function.

- To restart transcription or translation, call the `Restart Mic Input` function.